Clearview AI used nearly 1m times by US police, it tells the BBC

Facial recognition firm Clearview has run nearly a million searches for US police, its founder has told the BBC.

CEO Hoan Ton-That also revealed Clearview now has 30bn images scraped from platforms such as Facebook, taken without users’ permission.

The company has been repeatedly fined millions of dollars in Europe and Australia for breaches of privacy.

Critics argue that the police’s use of Clearview puts everyone into a “perpetual police line-up”.

“Whenever they have a photo of a suspect, they will compare it to your face,” says Matthew Guaragilia from the Electronic Frontier Foundation. “It’s far too invasive.”

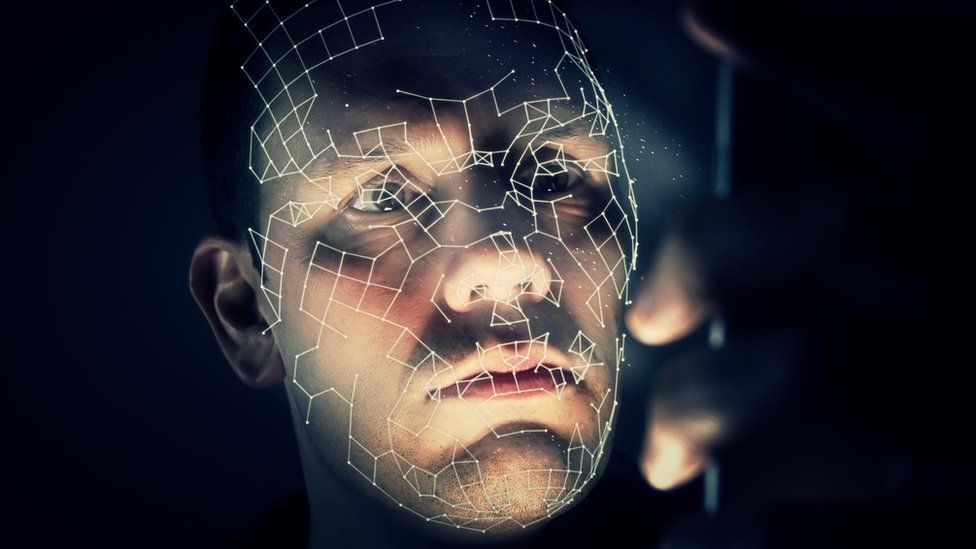

Clearview’s system allows a law enforcement customer to upload a photo of a face and find matches in a database of billions of images it has collected.

It then provides links to where matching images appear online. It is considered one of the most powerful and accurate facial recognition companies in the world.

The company is banned from selling its services to most US companies, after the American Civil Liberties Union (ACLU) took Clearview AI to court in Illinois for breaking privacy law.

But there is an exemption for police, and Mr Ton-That says his software is used by hundreds of police forces across the US.

Police in the US do not routinely reveal whether they use the software, and it is banned in several US cities including Portland, San Francisco and Seattle.

Police use of facial recognition is often presented to the public as being reserved for serious or violent crimes.

In a rare interview with law enforcement about the effectiveness of Clearview, Miami Police said they used the software for every type of crime, from murders to shoplifting.

Assistant Chief of Police Armando Aguilar said his team used the system about 450 times a year, and that it had helped solve several murders.

However, critics say there are almost no laws around the use of facial recognition by police.

Mr Aguilar says Miami police treat facial recognition like a tip. “We don’t make an arrest because an algorithm tells us to,” he says. “We either put that name in a photographic line-up or we go about solving the case through traditional means.”

Mistaken identity

There are a handful of documented cases of mistaken identity using facial recognition by the police. However, the lack of data and transparency around police use means the true figure is likely far higher.

Mr Ton-That says he is not aware of any cases of mistaken identity using Clearview. He accepts police have made wrongful arrests using facial recognition technology, but attributes those to “poor policing”.

Clearview often points to research that shows it has a near 100% accuracy rate. But these figures are often based on mugshots.

In reality, the accuracy of Clearview depends on the quality of the image that is fed into it – something Mr Ton-That accepts.

Civil rights campaigners want police forces that use Clearview to openly say when it is used – and for its accuracy to be openly tested in court. They want the algorithm scrutinised by independent experts, and are sceptical of the company’s claims.

Kaitlin Jackson is a criminal defence lawyer based in New York who campaigns against the police’s use of facial recognition.

“I think the truth is that the idea that this is incredibly accurate is wishful thinking,” she says. “There is no way to know that when you’re using images in the wild like screengrabs from CCTV.”

However, Mr Ton-That told the BBC he does not want to testify in court to its accuracy.

“We don’t really want to be in court testifying about the accuracy of the algorithm… because the investigators, they’re using other methods to also verify it,” he says.

Mr Ton-That says he has recently given Clearview’s system to defence lawyers in specific cases. He believes that both prosecutors and defenders should have the same access to the technology.

Last year, Andrew Conlyn from Fort Myers, Florida, had charges against him dropped after Clearview was used to find a crucial witness.

Mr Conlyn was the passenger in a friend’s car in March 2017 when it crashed into palm trees at high speed.

The driver was ejected from the car and killed. A passerby pulled Mr Conlyn from the wreckage, but left without making a statement.

Although Mr Conlyn said he was the passenger, police suspected he had been driving and he was charged with vehicular homicide.

His lawyers had an image of the passerby from police body cam footage. Just before his trial, Mr Ton-That allowed Clearview to be used in the case.

“This AI popped him up in like, three to five seconds,” Mr Conlyn’s defence lawyer, Christopher O’Brien, told the BBC. “It was phenomenal.”

The witness, Vince Ramirez, made a statement that he had taken Mr Conlyn out of the passenger’s seat. Shortly after, the charges were dropped.

But even though there have been cases where Clearview is proven to have worked, some believe it comes at too high a price.

“Clearview is a private company that is making face prints of people based on their photos online without their consent,” says Mr Guaragilia.

“It’s a huge problem for civil liberties and civil rights, and it absolutely needs to be banned.”

Viewers in the UK can watch the Our World documentary into Clearview AI on BBC iPlayer

Published at Mon, 27 Mar 2023 22:44:57 +0000